Last Update: February 5, 2025

BY eric

Using Stable Diffusion WebUI Docker for Image Generation – A Newbie's Guide

Introduction

Stable Diffusion is a powerful open-source AI model designed to generate high-quality images from text prompts. However, setting up a local environment with different UI options can be complex. Stable Diffusion WebUI Docker simplifies this process by offering a containerized solution that integrates multiple user interfaces for seamless image generation.

While it's possible to generate images directly from the command line, that route is less intuitive and requires more technical expertise. To make Stable Diffusion more accessible, various user interfaces (UIs) have been developed that provide a friendlier experience.

Stable Diffusion WebUI Docker brings these UIs together in a single container, making installation and usage more convenient. This article will guide you through installing, configuring, and using Stable Diffusion WebUI Docker to streamline your image generation workflow.

How UIs Work with Stable Diffusion

A UI acts as a frontend that communicates with Stable Diffusion's backend model. The backend performs image generation using deep learning, while the UI provides an easy way to input text prompts, adjust settings, and view results.

Popular Stable Diffusion UIs

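The Docker profiles used later in this guide correspond to two of them: the WebUI by AUTOMATIC1111 (the auto profile) and ComfyUI (the comfy profile). Each UI provides different levels of control, automation, and accessibility depending on the user's needs.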

Prerequisites

Now it is time to get started. Before you can use Stable Diffusion WebUI Docker, you will need to install Docker, set up a Docker environment and install other necessary dependencies.

If you are on Windows, ensure you have the following:

- Docker Desktop (Check Docker Desktop Installation Guide)

- NVIDIA drivers & CUDA (for GPU acceleration)

- At least 8GB VRAM (Recommended)

- Basic familiarity with Docker commands

- Git installed (Check Git Installation Guide)

If you are on Linux, ensure you have the following:

- Docker installed (Check Docker Installation Guide)

- NVIDIA drivers & CUDA (for GPU acceleration)

- At least 8GB VRAM (Recommended)

- Basic familiarity with Docker commands

- Git installed (use your system package manager, for example, sudo apt install git)
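As a quick sanity check on the lists above, the following is a minimal sketch that reports which tools are on your PATH. It assumes a POSIX shell (a Linux terminal, or WSL/Git Bash on Windows); `have` and `check_prereqs` are hypothetical helper names made up for this example, not part of Docker or the repository.

```shell
# have TOOL: succeeds if TOOL can be found on PATH.
# (hypothetical helper, introduced only for this sketch)
have() {
  command -v "$1" >/dev/null 2>&1
}

# check_prereqs TOOL...: prints one line per tool and
# returns 1 if at least one of them is missing.
check_prereqs() {
  missing=0
  for tool in "$@"; do
    if have "$tool"; then
      echo "ok:      $tool"
    else
      echo "missing: $tool"
      missing=1
    fi
  done
  return "$missing"
}

# Typical call for this guide (nvidia-smi ships with the NVIDIA driver):
#   check_prereqs docker git nvidia-smi
```

This only confirms that the commands exist; it does not verify VRAM size or that Docker itself can reach the GPU.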

Installation

Open a command prompt (cmd on Windows, Terminal on Linux) and navigate to the directory where you want to clone the repository. If you are on Windows, use cd to navigate, for example, cd C:\Users\yourname\ai. If you are on Linux, use cd to navigate, for example, cd /home/yourname/ai.

To get started, clone the repository and build the Docker container:

git clone https://github.com/AbdBarho/stable-diffusion-webui-docker.git

cd stable-diffusion-webui-docker

docker compose --profile download up --build

# then

docker compose --profile auto up --build

# you can replace `auto` with `auto-cpu`, `comfy`, or `comfy-cpu`
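If you are not sure whether to use a GPU or a CPU profile, the choice can be scripted. The sketch below assumes that a working `nvidia-smi` on the host means a usable GPU; `has_gpu` and `pick_profile` are hypothetical helpers, while the profile names are the ones listed above.

```shell
# has_gpu: prints "yes" if nvidia-smi exists and runs successfully, else "no".
# (assumption: a working nvidia-smi on the host implies a usable NVIDIA GPU)
has_gpu() {
  if command -v nvidia-smi >/dev/null 2>&1 && nvidia-smi >/dev/null 2>&1; then
    echo yes
  else
    echo no
  fi
}

# pick_profile UI GPU: UI is "auto" or "comfy", GPU is "yes" or "no".
# Prints the matching compose profile, adding "-cpu" when no GPU is usable.
pick_profile() {
  if [ "$2" = "yes" ]; then
    echo "$1"
  else
    echo "$1-cpu"
  fi
}

# Usage, run from the repository directory:
#   docker compose --profile "$(pick_profile auto "$(has_gpu)")" up --build
```

Note that this checks the host only. Docker may still fail to access the GPU if the NVIDIA container runtime is not set up.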

When it is done, you should see something like the following message:

$ docker compose --profile auto up --build

[+] Building 28.5s (27/27) FINISHED docker:default

=> [auto internal] load build definition from Dockerfile 0.0s

=> => transferring dockerfile: 3.09kB 0.0s

=> WARN: FromAsCasing: 'as' and 'FROM' keywords' casing do not match (li 0.0s

=> [auto internal] load metadata for docker.io/pytorch/pytorch:2.3.0-cud 1.5s

=> [auto internal] load metadata for docker.io/alpine/git:2.36.2 1.5s

=> [auto internal] load .dockerignore 0.0s

=> => transferring context: 2B 0.0s

=> [auto internal] load build context 0.0s

=> => transferring context: 3.18kB 0.0s

=> [auto download 1/9] FROM docker.io/alpine/git:2.36.2@sha256:ec491c893 0.0s

=> [auto stage-1 1/12] FROM docker.io/pytorch/pytorch:2.3.0-cuda12.1-cu 0.0s

=> CACHED [auto stage-1 2/12] RUN --mount=type=cache,target=/var/cache/ 0.0s

=> CACHED [auto stage-1 3/12] RUN --mount=type=cache,target=/root/.cach 0.0s

=> [auto stage-1 4/12] RUN --mount=type=cache,target=/root/.cache/pip 1.8s

=> CACHED [auto download 2/9] COPY clone.sh /clone.sh 0.0s

=> CACHED [auto download 3/9] RUN . /clone.sh stable-diffusion-webui-ass 0.0s

=> CACHED [auto download 4/9] RUN . /clone.sh stable-diffusion-stability 0.0s

=> CACHED [auto download 5/9] RUN . /clone.sh BLIP https://github.com/sa 0.0s

=> CACHED [auto download 6/9] RUN . /clone.sh k-diffusion https://github 0.0s

=> CACHED [auto download 7/9] RUN . /clone.sh clip-interrogator https:// 0.0s

=> CACHED [auto download 8/9] RUN . /clone.sh generative-models https:// 0.0s

=> CACHED [auto download 9/9] RUN . /clone.sh stable-diffusion-webui-ass 0.0s

=> [auto stage-1 5/12] COPY --from=download /repositories/ /stable-diff 0.1s

=> [auto stage-1 6/12] RUN mkdir /stable-diffusion-webui/interrogate && 0.1s

=> [auto stage-1 7/12] RUN --mount=type=cache,target=/root/.cache/pip 19.4s

=> [auto stage-1 8/12] RUN apt-get -y install libgoogle-perftools-dev & 4.3s

=> [auto stage-1 9/12] COPY . /docker 0.0s

=> [auto stage-1 10/12] RUN sed -i 's/in_app_dir = .*/in_app_dir = Tru 0.1s

=> [auto stage-1 11/12] WORKDIR /stable-diffusion-webui 0.1s

=> [auto] exporting to image 0.9s

=> => exporting layers 0.9s

=> => writing image sha256:9fb2e1b4b8fd548ed75dd3bc9834470e0836c986a97e9 0.0s

=> => naming to docker.io/library/sd-auto:78 0.0s

=> [auto] resolving provenance for metadata file 0.0s

[+] Running 2/2

✔ auto Built 0.0s

✔ Container webui-docker-auto-1 Recre... 0.1s

Attaching to auto-1

auto-1 | /stable-diffusion-webui

auto-1 | total 772K

auto-1 | drwxr-xr-x 1 root root 4.0K Feb 5 01:44 .

auto-1 | drwxr-xr-x 1 root root 4.0K Feb 5 01:44 ..

auto-1 | -rw-r--r-- 1 root root 48 Feb 5 01:27 .eslintignore

auto-1 | -rw-r--r-- 1 root root 3.4K Feb 5 01:27 .eslintrc.js

auto-1 | drwxr-xr-x 8 root root 4.0K Feb 5 01:27 .git

auto-1 | -rw-r--r-- 1 root root 55 Feb 5 01:27 .git-blame-ignore-revs

auto-1 | drwxr-xr-x 4 root root 4.0K Feb 5 01:27 .github

auto-1 | -rw-r--r-- 1 root root 521 Feb 5 01:27 .gitignore

auto-1 | -rw-r--r-- 1 root root 119 Feb 5 01:27 .pylintrc

auto-1 | -rw-r--r-- 1 root root 84K Feb 5 01:27 CHANGELOG.md

auto-1 | -rw-r--r-- 1 root root 243 Feb 5 01:27 CITATION.cff

auto-1 | -rw-r--r-- 1 root root 657 Feb 5 01:27 CODEOWNERS

auto-1 | -rw-r--r-- 1 root root 35K Feb 5 01:27 LICENSE.txt

auto-1 | -rw-r--r-- 1 root root 13K Feb 5 01:27 README.md

auto-1 | -rw-r--r-- 1 root root 146 Feb 5 01:27 _typos.toml

auto-1 | drwxr-xr-x 2 root root 4.0K Feb 5 01:27 configs

auto-1 | drwxr-xr-x 2 root root 4.0K Feb 5 01:27 embeddings

auto-1 | -rw-r--r-- 1 root root 167 Feb 5 01:27 environment-wsl2.yaml

auto-1 | drwxr-xr-x 2 root root 4.0K Feb 5 01:27 extensions

auto-1 | drwxr-xr-x 13 root root 4.0K Feb 5 01:27 extensions-builtin

auto-1 | drwxr-xr-x 2 root root 4.0K Feb 5 01:27 html

auto-1 | drwxr-xr-x 2 root root 4.0K Feb 5 01:44 interrogate

auto-1 | drwxr-xr-x 2 root root 4.0K Feb 5 01:27 javascript

auto-1 | -rw-r--r-- 1 root root 1.3K Feb 5 01:27 launch.py

auto-1 | drwxr-xr-x 2 root root 4.0K Feb 5 01:27 localizations

auto-1 | drwxr-xr-x 7 root root 4.0K Feb 5 01:27 models

auto-1 | drwxr-xr-x 7 root root 4.0K Feb 5 01:27 modules

auto-1 | -rw-r--r-- 1 root root 185 Feb 5 01:27 package.json

auto-1 | -rw-r--r-- 1 root root 841 Feb 5 01:27 pyproject.toml

auto-1 | drwxr-xr-x 8 root root 4.0K Feb 5 01:20 repositories

auto-1 | -rw-r--r-- 1 root root 49 Feb 5 01:27 requirements-test.txt

auto-1 | -rw-r--r-- 1 root root 371 Feb 5 01:27 requirements.txt

auto-1 | -rw-r--r-- 1 root root 42 Feb 5 01:27 requirements_npu.txt

auto-1 | -rw-r--r-- 1 root root 645 Feb 5 01:27 requirements_versions.txt

auto-1 | -rw-r--r-- 1 root root 411K Feb 5 01:27 screenshot.png

auto-1 | -rw-r--r-- 1 root root 6.1K Feb 5 01:27 script.js

auto-1 | drwxr-xr-x 2 root root 4.0K Feb 5 01:27 scripts

auto-1 | -rw-r--r-- 1 root root 43K Feb 5 01:27 style.css

auto-1 | drwxr-xr-x 4 root root 4.0K Feb 5 01:27 test

auto-1 | drwxr-xr-x 2 root root 4.0K Feb 5 01:27 textual_inversion_templates

auto-1 | -rw-r--r-- 1 root root 670 Feb 5 01:27 webui-macos-env.sh

auto-1 | -rw-r--r-- 1 root root 84 Feb 5 01:27 webui-user.bat

auto-1 | -rw-r--r-- 1 root root 1.4K Feb 5 01:27 webui-user.sh

auto-1 | -rw-r--r-- 1 root root 2.3K Feb 5 01:27 webui.bat

auto-1 | -rw-r--r-- 1 root root 5.3K Feb 5 01:27 webui.py

auto-1 | -rwxr-xr-x 1 root root 11K Feb 5 01:27 webui.sh

auto-1 | Mounted .cache

auto-1 | Mounted config_states

auto-1 | mkdir: created directory '/stable-diffusion-webui/repositories/CodeFormer'

auto-1 | mkdir: created directory '/stable-diffusion-webui/repositories/CodeFormer/weights'

auto-1 | Mounted .cache

auto-1 | Mounted embeddings

auto-1 | Mounted config.json

auto-1 | Mounted models

auto-1 | Mounted styles.csv

auto-1 | Mounted ui-config.json

auto-1 | Mounted extensions

auto-1 | Installing extension dependencies (if any)

auto-1 | /opt/conda/lib/python3.10/site-packages/timm/models/layers/__init__.py:48: FutureWarning: Importing from timm.models.layers is deprecated, please import via timm.layers

auto-1 | warnings.warn(f"Importing from {__name__} is deprecated, please import via timm.layers", FutureWarning)

auto-1 | Calculating sha256 for /stable-diffusion-webui/models/Stable-diffusion/sd-v1-5-inpainting.ckpt: Running on local URL: http://0.0.0.0:7860

auto-1 |

auto-1 | To create a public link, set `share=True` in `launch()`.

auto-1 | Startup time: 3.9s (import torch: 1.6s, import gradio: 0.5s, setup paths: 0.6s, initialize shared: 0.1s, other imports: 0.2s, load scripts: 0.2s, create ui: 0.3s).

auto-1 | c6bbc15e3224e6973459ba78de4998b80b50112b0ae5b5c67113d56b4e366b19

auto-1 | Loading weights [c6bbc15e32] from /stable-diffusion-webui/models/Stable-diffusion/sd-v1-5-inpainting.ckpt

auto-1 | Creating model from config: /stable-diffusion-webui/configs/v1-inpainting-inference.yaml

auto-1 | /opt/conda/lib/python3.10/site-packages/huggingface_hub/file_download.py:795: FutureWarning: `resume_download` is deprecated and will be removed in version 1.0.0. Downloads always resume when possible. If you want to force a new download, use `force_download=True`.

auto-1 | warnings.warn(

vocab.json: 100% 961k/961k [00:00<00:00, 1.45MB/s]

merges.txt: 100% 525k/525k [00:00<00:00, 2.55MB/s]

special_tokens_map.json: 100% 389/389 [00:00<00:00, 3.79MB/s]

tokenizer_config.json: 100% 905/905 [00:00<00:00, 3.61MB/s]

config.json: 100% 4.52k/4.52k [00:00<00:00, 14.4MB/s]

auto-1 | Applying attention optimization: xformers... done.

auto-1 | Model loaded in 42.1s (calculate hash: 35.2s, load weights from disk: 1.3s, create model: 4.1s, apply weights to model: 1.0s, apply half(): 0.4s, calculate empty prompt: 0.1s).

Once the log shows "Running on local URL", open the app in your browser at http://localhost:7860/.
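The first start can take a while, since the model is hashed and loaded before the UI becomes responsive. As a rough sketch, you could poll the URL from a second terminal until it answers. This assumes `curl` is installed; `wait_for_ui` is a hypothetical helper name.

```shell
# wait_for_ui URL [TRIES] [DELAY_SECONDS]
# Polls URL with curl until it answers or the tries run out.
wait_for_ui() {
  url="$1"
  tries="${2:-30}"
  delay="${3:-2}"
  i=0
  while [ "$i" -lt "$tries" ]; do
    # -s: silent, -f: treat HTTP errors as failures, -o: discard the body
    if curl -sf -o /dev/null --max-time 5 "$url"; then
      echo "UI is up at $url"
      return 0
    fi
    i=$((i + 1))
    sleep "$delay"
  done
  echo "UI did not respond at $url" >&2
  return 1
}

# Usage:
#   wait_for_ui http://localhost:7860/
```

With the defaults this gives up after about a minute; raise the number of tries on slower machines.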

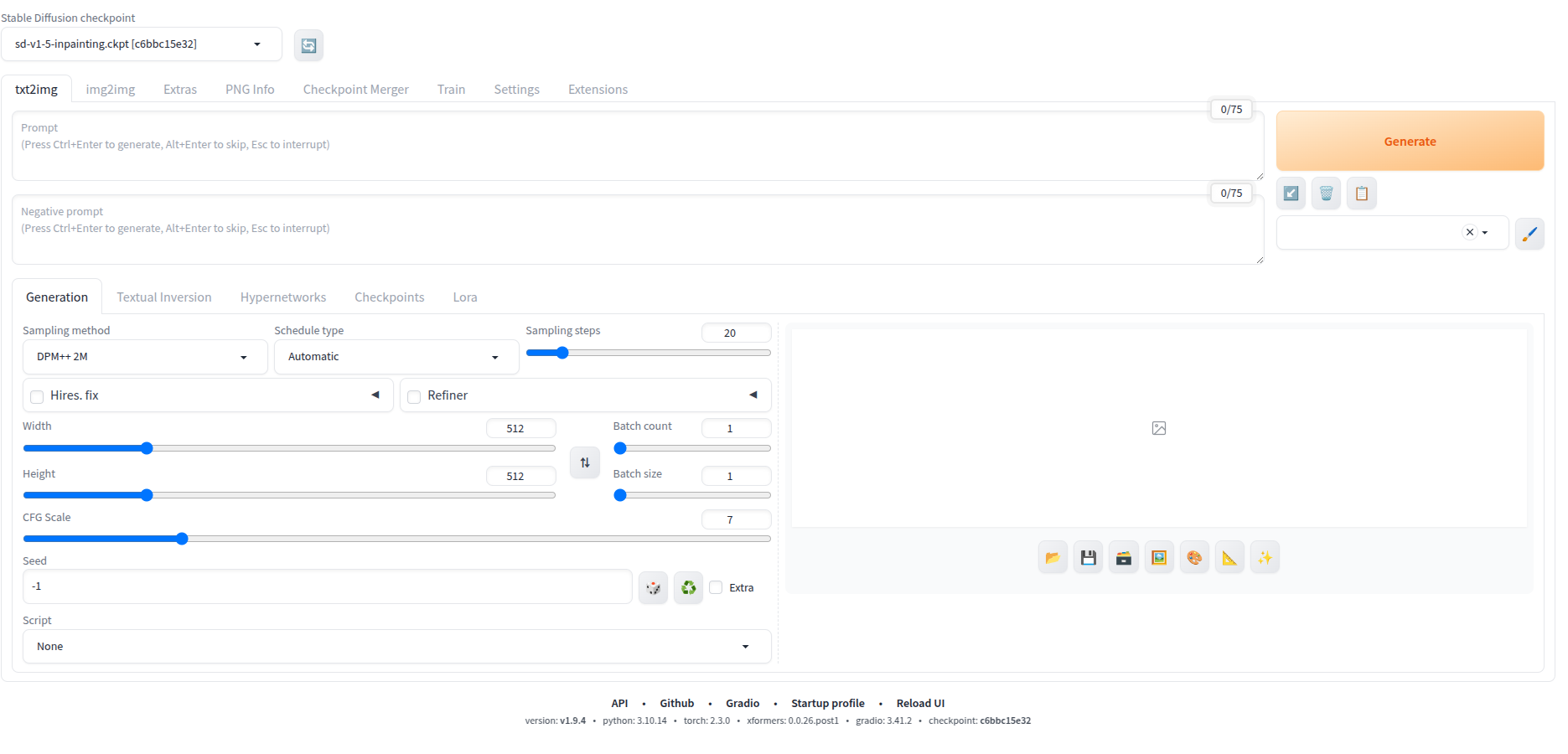

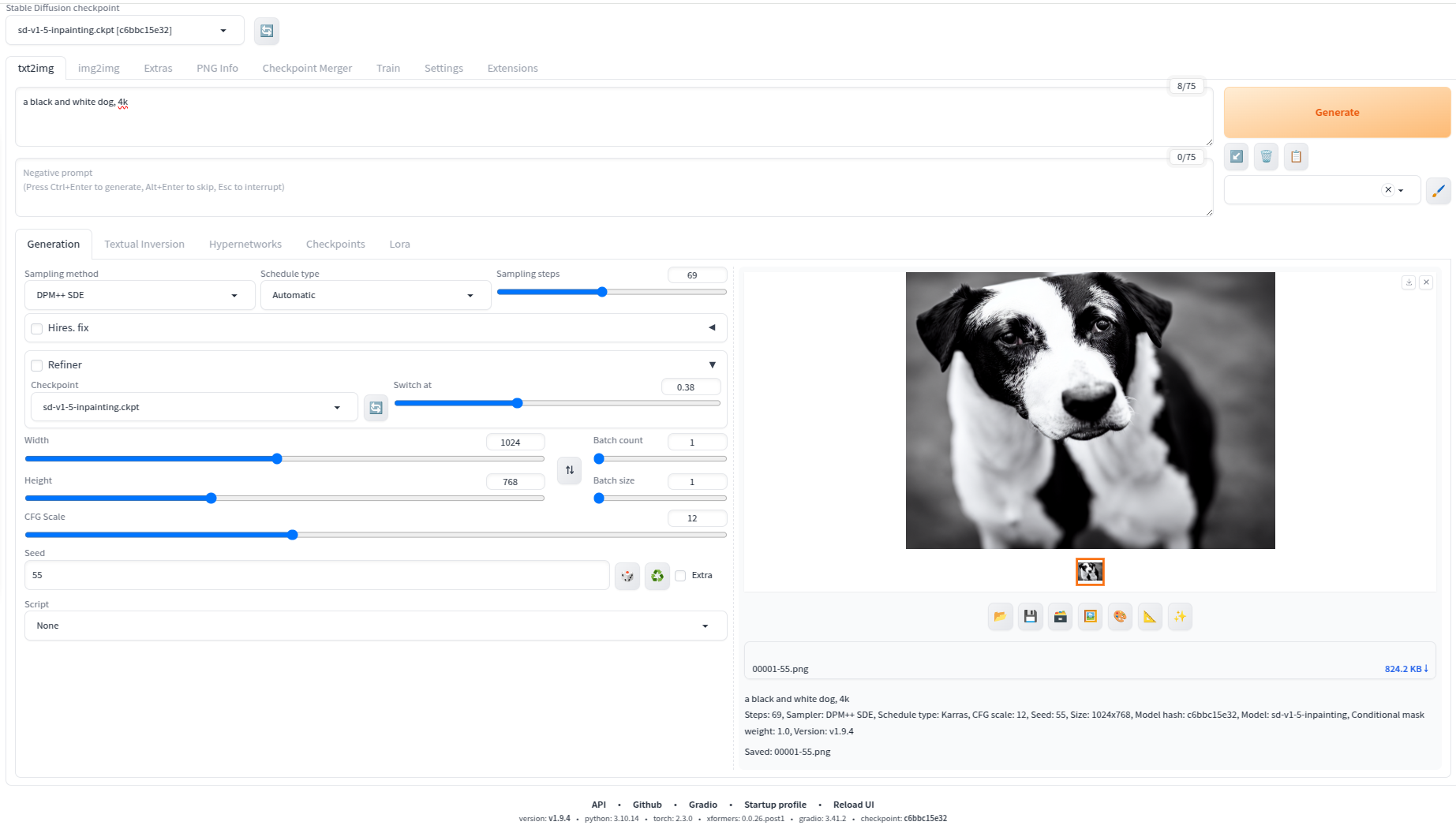

Now it's time to generate images! Type in the prompt:

a black and white dog, 4k

The parameters used to generate this image are:

Steps: 69

Sampler: DPM++ SDE

Schedule type: Karras

CFG scale: 12

Seed: 55

Size: 1024x768

Model hash: c6bbc15e32

Model: sd-v1-5-inpainting

Conditional mask weight: 1.0

Version: v1.9.4

Here is the saved image:

She actually really looks like my dog Bryson!

You can tweak the parameters and find out what works best for you. The common parameters are:

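- prompt: The text describing what the image should contain

- negative_prompt: The text describing what the image should avoid

- steps: The number of sampling steps in the diffusion process

- cfg_scale: The classifier-free guidance scale, which controls how strongly the image follows the prompt

- seed: The random seed; reusing the same seed with the same settings reproduces the same image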
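These parameter names are also the field names used by the AUTOMATIC1111 WebUI's HTTP API, which is available when the WebUI is launched with its `--api` flag. Whether your container starts with that flag is an assumption you should verify in your compose configuration. The sketch below only builds the JSON request body; `txt2img_payload` is a hypothetical helper, and "blurry" is a made-up negative prompt.

```shell
# txt2img_payload PROMPT NEGATIVE_PROMPT STEPS CFG_SCALE SEED
# Prints a JSON body using the parameter names listed above.
# Note: no JSON escaping is done, so avoid quotes and backslashes in prompts.
txt2img_payload() {
  printf '{"prompt": "%s", "negative_prompt": "%s", "steps": %s, "cfg_scale": %s, "seed": %s}\n' \
    "$1" "$2" "$3" "$4" "$5"
}

# Usage with the values from this guide (steps 69, CFG scale 12, seed 55),
# assuming the API is enabled on the running WebUI:
#   txt2img_payload "a black and white dog, 4k" "blurry" 69 12 55 |
#     curl -s -X POST -H 'Content-Type: application/json' -d @- \
#       http://localhost:7860/sdapi/v1/txt2img
```

Keeping the payload in a small function makes it easy to change one parameter at a time, for example the seed, and compare the results.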

Please note that after you click the "Save" button, the images are saved in the output/saved folder of the Stable Diffusion WebUI Docker directory.

Troubleshooting

Error response from daemon: could not select device driver "nvidia" with capabilities:

The solution is to install the NVIDIA Container Toolkit (see NVIDIA's installation guide). On Debian or Ubuntu, for example:

curl -fsSL https://nvidia.github.io/libnvidia-container/gpgkey | sudo gpg --dearmor -o /usr/share/keyrings/nvidia-container-toolkit-keyring.gpg \

&& curl -s -L https://nvidia.github.io/libnvidia-container/stable/deb/nvidia-container-toolkit.list | \

sed 's#deb https://#deb [signed-by=/usr/share/keyrings/nvidia-container-toolkit-keyring.gpg] https://#g' | \

sudo tee /etc/apt/sources.list.d/nvidia-container-toolkit.list

sudo apt-get update

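sudo apt-get install -y nvidia-container-toolkit

# then register the NVIDIA runtime with Docker and restart the daemon

sudo nvidia-ctk runtime configure --runtime=docker

sudo systemctl restart docker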
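To confirm that Docker can now reach the GPU, one option is to run `nvidia-smi` inside a throwaway container. This is a sketch, not part of the repository: `gpu_smoke_test` is a hypothetical wrapper, and the `ubuntu` image is just a small, convenient choice.

```shell
# gpu_smoke_test: runs nvidia-smi inside a temporary container.
# Returns 2 if docker is not installed; otherwise returns docker's exit status.
gpu_smoke_test() {
  if ! command -v docker >/dev/null 2>&1; then
    echo "docker not found on PATH" >&2
    return 2
  fi
  # If the toolkit is still missing, this fails with the same
  # 'could not select device driver "nvidia"' error as before.
  docker run --rm --gpus all ubuntu nvidia-smi
}

# Usage (add sudo if your user is not in the docker group):
#   gpu_smoke_test
```

If the command prints the usual `nvidia-smi` table, the container runtime is set up and the `auto` or `comfy` profile should be able to use the GPU.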

ImportError: cannot import name 'TypeIs' from 'typing_extensions' (/opt/conda/lib/python3.10/site-packages/typing_extensions.py)

As reported in this issue, the solution is to downgrade typing_extensions, as suggested by GitHub user yasu-nxt:

- Locate the Dockerfile of the WebUI by AUTOMATIC1111:

./services/AUTOMATIC1111/Dockerfile

- Find the instruction that looks like this:

RUN \

git clone https://github.com/AUTOMATIC1111/stable-diffusion-webui.git && \

cd stable-diffusion-webui && \

git reset --hard v1.9.4 && \

pip install -r requirements_versions.txt

- Right after that instruction, insert the following content:

RUN \

pip uninstall -y typing_extensions && \

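pip install typing_extensions==4.11.0

Then rebuild the image with the same command as before (docker compose --profile auto up --build) so the change takes effect.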
